The Superhuman Mathematician

Remind me in 1 year

This week, news broke that Christian Szegedy, a co-founder of XAI, and a legend in AI research, inventor of Batch Normalization and Adversarial Examples, had left OpenAI, and joined a little known startup called Morph Labs.

Morph bills itself as:

Morph Labs is building a cloud for superintelligence.

Infinibranch enables AI agents on Morph Cloud to snapshot, replicate, autoscale, test, debug, deploy, and reason about software at light speed.

Morph Labs

Of course, this is major news, as Elon gave founder shares to the early researchers at xAI, and had variously said that Grok would be “maximally truth seeking” and “uncover the secrets of the universe”.

What follows is a some twitter threads tracing what happened.

What is autoformalization?

The goal of autoformalization is to create a system that automatically learns to read natural language content and turns it into abstract, machine verifiable formalization

Christian Szegedy

To cut to the chase, using a software program called Lean which can verify mathematical theorems (think of it as a compiler for math), attached to a reasoning AI, the goal for these two component to form a system that can automatically formalize (certified will compile) math.

In the case of the ABC conjecture, Morph Labs came to a near solution using reasoners running in parallel. It did however require human mathematicians to structure the problem and formulate the questions, so it was not quite autoformalized.

Szegedy had written a 2019 paper proposing autoformalization and was a seed investor in Morph, and their progress nudged him to leave xAI.

On to the twitter posts, earlier in the week Christian laid out his thesis on the next steps for superintelligence:

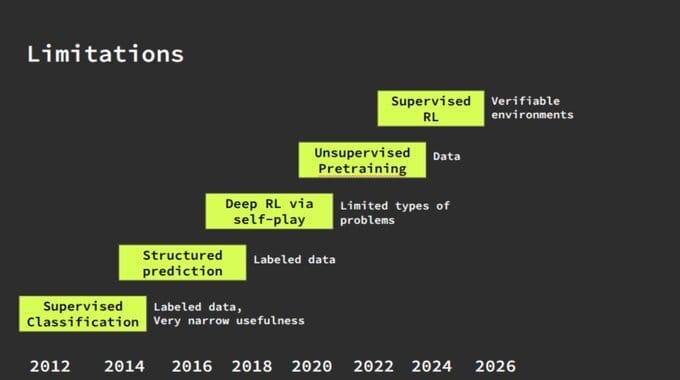

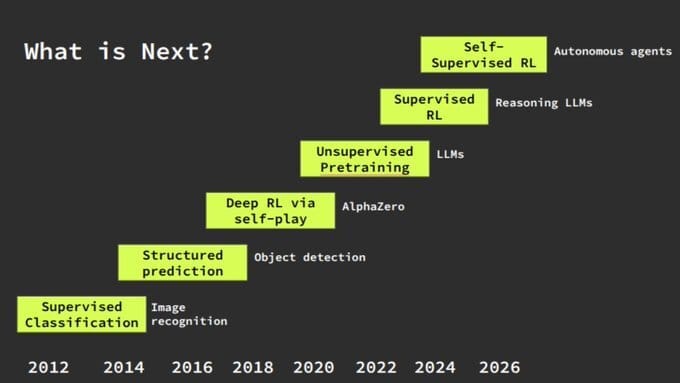

The history of AI from 2012 to present shows each paradigm solving previous limitations while revealing new ones. The next state-of-the-art will be Supervised RL for reasoning. It is fundamentally bottlenecked by the need for verifiable environments.

: Christian Szegedy

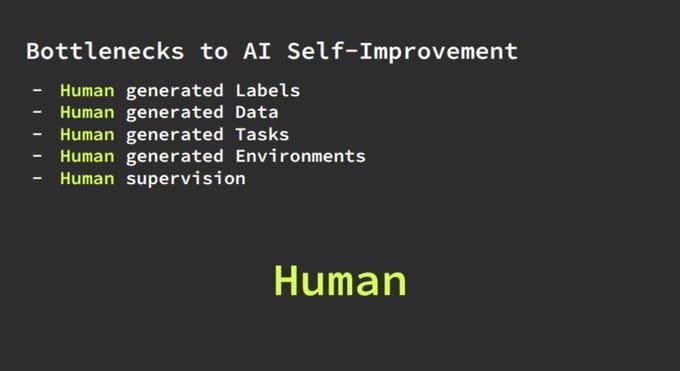

Current AI systems are completely dependent on human-generated labels, data, tasks, environments, and supervision. This human dependency creates an insurmountable ceiling on AI capabilities regardless of compute scale.

: Christian Szegedy

What Does the Machine Want?

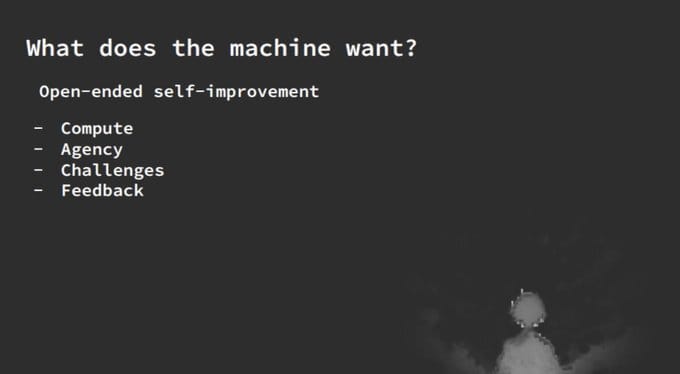

For true self-improvement, AI needs four key ingredients: compute, agency, challenges, and feedback. While compute scales and agency can be sandboxed, the supply of meaningful challenges and reliable feedback remains the critical bottleneck.

: Christian Szegedy

The Next Generation of AI

The solution requires AI systems with safe execution (strong sandboxing), full agency in checkpointable environments, self-created curricula, indefinite learning on self-posed problems, self-verification capabilities, and the ability to produce independently verifiable artifacts. These systems will improve their own alignment as they develop.

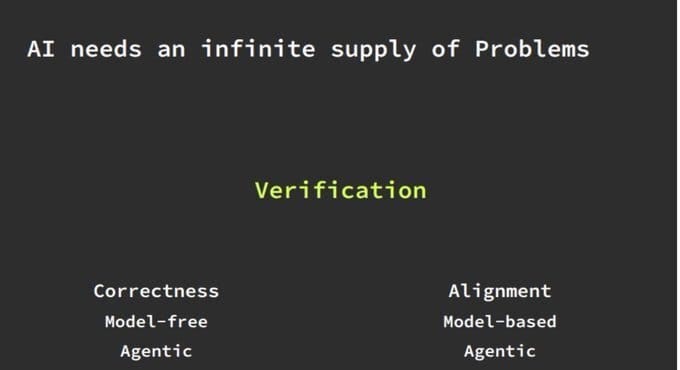

Verification provides the key to unlimited problems by spanning both correctness and alignment dimensions, supporting both model-free and model-based approaches, and enabling fully agentic systems. Verification transforms from a bottleneck into an engine for self-improvement.

: Christian Szegedy

Trustlessly Aligned AI

The ultimate goal is AI whose safety and alignment can be verified through its outputs rather than trusted based on its training process. Every artifact produced can be independently validated, creating trust through verification rather than faith.

: Christian Szegedy

This started some twitter threads, first revealing he’d parted ways with the xAI team over a difference in direction.

RobKai 🇩🇪@robertkainz04 June 17, 2025 at 2:46 AM

Tony Wu wants to create a Superintelligent AI mathematician and he worked with Christian together at xAI 👍

Christian Szegedy @ChrSzeged June 17, 2025 at 4:24 AM

Differences in research focus was the main reason of my leaving xAI. While Tony is still a great, close friend, we agreed that the best course of action was to part ways.

Then a back and forth over Tererence Tao’s knowledge of the AI in math space, and Christian’s belief that this would be solved in 12 months (!)

vitrupo (@vitrupo) June 14, 2025 at 8:58 PM

Terence Tao says today's AIs pass the eye test -- but fail miserably on the smell test. They generate proofs that look flawless. But the mistakes are subtle, and strangely inhuman. "There's a metaphorical mathematical smell.. it's not clear how to get AI to duplicate that."

Intelligence isn't what looks correct. It's what smells true.

doomslide (@doomslide) June 14, 2025 at 11:41 PM

The reasoning LLMs learned the style of good mathematics but not the actual process. Is the problem sample efficiency of RL? Complexity scaling of CoT? Data scarcity? (Poll.)

Christian Szegedy (@ChrSzegedy) June 15, 2025 at 12:53 PM

This will be solved soon (within a year) by verification-driven RL for reasoning.

Christian Szegedy (@ChrSzegedy) June 15, 2025 at 1:46 PM

I have skimmed through your post. I think you are overthinking it and Terry is just shortsighted about AI.

doomslide (@doomslide) June 15, 2025 at 1:48 PM

I think he was shortsighted. Not so much now. His criticism of the types of mistakes LLMs do is valid. Indicative of poor generalization. It's similar to what happens in large integer arithmetic. It can be fixed dynamically with scaffolding + cot but it's not in the weights.

Christian Szegedy (@ChrSzegedy) June 15, 2025 at 2:00 PM

I talked to him last year and my impression is that his thinking abt AI progress as as flawed as most people's: viewing it statically, not the trajectory. He might be right about particular failure modes, but that's mostly irrelevant in the big scheme of things.

doomslide (@doomslide) June 15, 2025 at 1:56 PM

The core thing i'm skeptical about is that even assuming there was a way to make the mathematician's process transparent and available as data the program would simply not fit inside the LLM efficiently enough to be learned with current available RL budgets.

doomslide (@doomslide) June 15, 2025 at 1:59 PM

TL;DR transformers eat too much tape

Christian Szegedy (@ChrSzegedy) June 15, 2025 at 2:05 PM

There is a clear path to bootstrap superhuman mathematical capabilities as described in my paper from 2019 , It's a bit outdated, but the essence still holds true, IMO. And i doubt you need more than 100B params maybe even 10B could do.

Which takes us to Christian’s paper from 2019, excerpted below

Excerpts from "A Promising Path Towards Autoformalization and General Artificial Intelligence"

Christian Szegedy, July 2019

This paper argues that in the next decade, we will see machines to surpass humans in general reasoning. Our best chance to achieve this is by creating a super-human mathematician first via autoformalization.

Mathematical reasoning is just reasoning about anything formal. Reasoning about anything formal is a powerful general tool. If we want to create an artificially intelligent system and demonstrate its general intelligence, it should be able to reason about any area of mathematics or at least it should be able to learn to do so when given enough time. If that is the case in practice, then we can be convinced that it is likely that it will be able to learn to cope with any scientific disciplines as well, once they are formalized precisely.

The task of formalization is to turn informal descriptions into some formally correct and automatically checkable format. More generally, "formalization" can refer to any process that takes an informal description for input and produces machine executable code.

These capabilities could revolutionize programming and change it fundamentally. Humans could communicate with machines in more concise natural language. This would boost productivity while democratizing the interaction with computers: everybody with good ideas would be able to create complex and safe systems quickly, without the help of expensive IT specialists. It would lower the barrier of entry for new businesses and could usher a new area of creativity where imagination becomes the deciding factor for success. Most importantly, this solution would give rise to extremely strong general purpose reasoning engines that could be integrated into AI applications that combine reasoning with perception. One could infuse strong reasoning capabilities into other systems and serve as a basis for a wide range of applications.

In Summary:

A reknowned computer scientist, co-founder of xAI decided to leave his (extremely well paid) position to join a tiny startup

In order to prove all of mathematics using AI, in the process creating a superhuman mathematician

Expects that this is a way to bootstrap superintelligence: by creating a very strong truthful AI that is aligned by default, and then use it to solve everything else

Expects when this is applied to code, we will finally have bug free code

Which he believes is not possible using current agentic code agents (because a 0.1% probability of error multiplied over an infinite number of steps, ends in error)

He expects to deliver this within 12 months

If this were truly to happen, it would kickstart the age of wonders. We will see.