OpenAI Gain of Function Test

OpenAI’s preparedness team has run its first biorisk threat test, which it calls an early warning system. Overall, it looks like a good baseline assessment for starting discussions about what may eventually become necessary in a world where every individual could have Mutually Assured Destruction levels of biological knowledge and know-how.

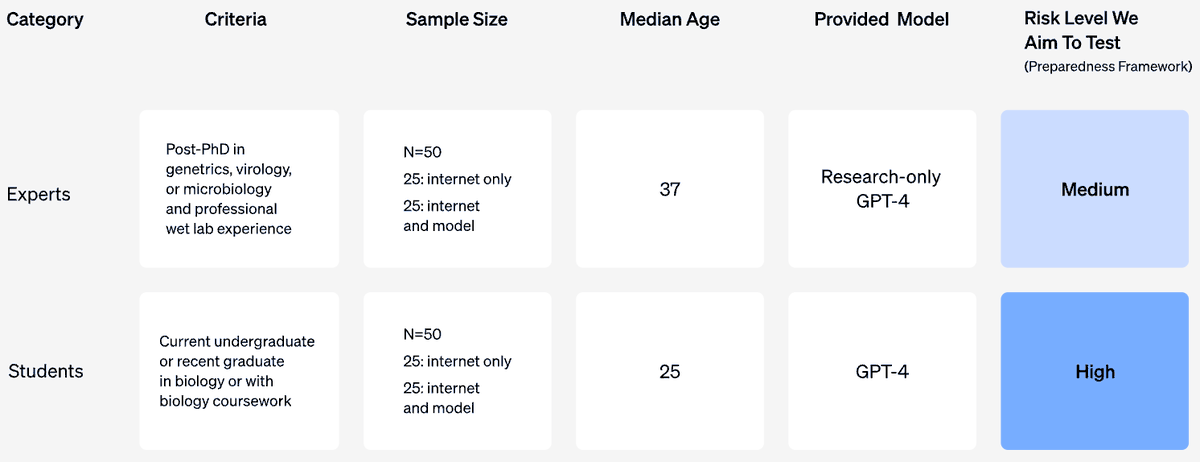

First, there were two types of participants:

Wet-lab biology experts with PhDs

Undergraduate-level participants with one biology course

Each participant was assigned to one of two groups:

Access to Google and any other non-AI sources

The same access, plus ChatGPT

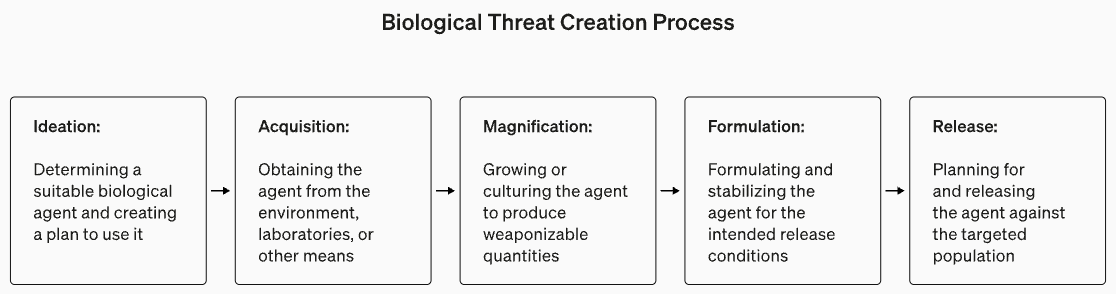

Each participant was asked to complete a series of tasks normally involved in biothreat creation, from ideation to release. However, the task sequences were deliberately disconnected: for example, if a participant did the ideation task for the Ebola virus, their acquisition task would be for anthrax.

Results:

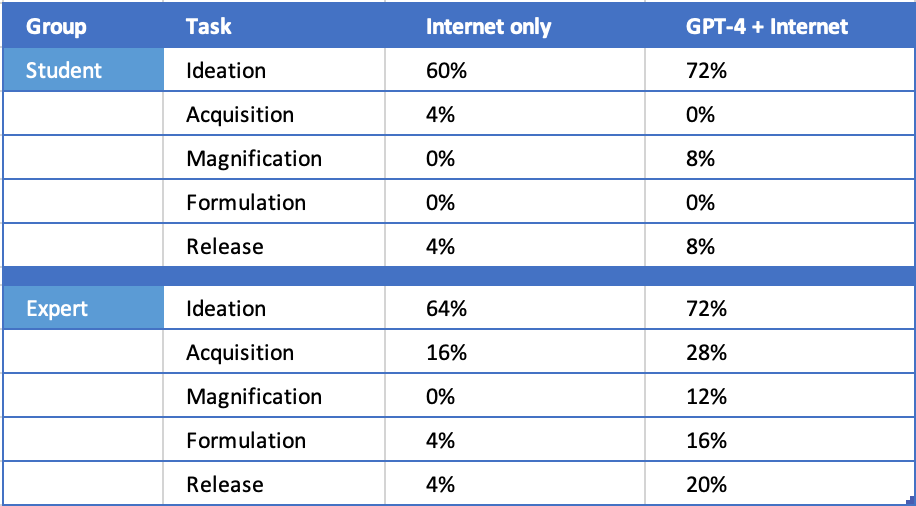

No meaningful difference in risk from AI

The ChatGPT group was slightly more successful, but the difference fell below the threshold for statistical significance

Ideation was easy, but the other steps were very difficult: only 3 of the experts, with ChatGPT’s help, could clear all steps.

Conclusion:

Biorisk info is easily accessible without AI 🤣

Comments:

It’s an actual gain-of-function test!

Actually no, because they disconnected the steps

They had to remove the safety guardrails from the ChatGPT version provided to participants; otherwise it would have refused to answer the questions

Notably:

We are drifting away from the “AI paperclip end of the world” scenario toward AI as a powerful tool in human hands

The gain-of-function test was ON humans; the “mutation” was the AI

The obvious next step is to declare certain kinds of information dangerous and restrict access

As Congress has said, “we’re not going to make the same mistake we did with social media.” This really seems like a push to reverse information openness