Can AI Predict The Future

of the questions we ask in order to receive answers


Here’s today at a glance:

🔮 Can AI Predict The Future

Paper: Approaching Human-Level Forecasting with Language Models

In this paper, researchers build a question-answering system from GPT-4 plus web search whose predictions approach, and in some settings beat, those of humans on prediction markets. They conclude that their ML system can forecast at a near-human level.

This immediately caused a furor, with Dan Hendrycks of the Center for AI Safety saying:

GPT-4 with simple engineering can predict the future around as well as crowds: https://arxiv.org/abs/2402.18563

On hard questions, it can do better than crowds.

If these systems become extremely good at seeing the future, they could serve as an objective, accurate third-party. This would help us better anticipate the longterm consequences of our actions and make more prudent decisions.

"The saddest aspect of life right now is that science gathers knowledge faster than society gathers wisdom." - Asimov

I didn't write this paper, but we called for AI forecasting research in Unsolved Problems in ML Safety some years back (http://arxiv.org/abs/2109.13916), and concretized as a research avenue a year later in Forecasting Future World Events with Neural Networks (https://arxiv.org/abs/2206.15474). Hopefully AI companies will add this feature as the election season begins.

Dan Hendrycks

How did they do it?

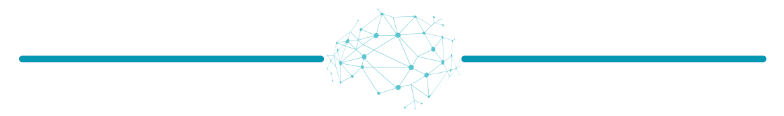

They built:

a retrieval system that takes a query, searches a news API, and summarizes the results

a reasoning system that takes the query and the news summaries and assesses them to produce a prediction along with a confidence level

Notably, they used LLMs at multiple points in the system, each driven by its own natural-language prompt, hence “ML system” rather than “ML model”.
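To make the two-stage shape concrete, here is a minimal sketch of a retrieve-then-reason pipeline. It is not the paper's code: every name in it (`Forecast`, `retrieve`, `reason`, `forecast`, the prompt wording, and the stub `fake_search` and `fake_llm` functions) is a hypothetical stand-in, and the news API and the LLM are passed in as plain callables so that the example runs without any network access.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical types for illustration; none of these names come from the paper.
SearchFn = Callable[[str], List[str]]  # query -> raw article texts
LLMFn = Callable[[str], str]           # prompt -> completion text


@dataclass
class Forecast:
    probability: float  # estimated chance the question resolves "yes"
    rationale: str      # the model's short justification


def retrieve(question: str, search: SearchFn, llm: LLMFn,
             max_articles: int = 5) -> List[str]:
    """Retrieval step: search news for the question, then summarize each hit."""
    articles = search(question)[:max_articles]
    return [llm(f"Summarize for the question '{question}':\n{a}")
            for a in articles]


def reason(question: str, summaries: List[str], llm: LLMFn) -> Forecast:
    """Reasoning step: ask the model for a probability given the summaries."""
    context = "\n".join(f"- {s}" for s in summaries)
    reply = llm(
        f"Question: {question}\nNews summaries:\n{context}\n"
        "Answer with a probability between 0 and 1 on the first line, "
        "then a short rationale."
    )
    first_line, _, rest = reply.partition("\n")
    # Clamp to [0, 1] in case the model returns something out of range.
    probability = min(1.0, max(0.0, float(first_line.strip())))
    return Forecast(probability=probability, rationale=rest.strip())


def forecast(question: str, search: SearchFn, llm: LLMFn,
             max_articles: int = 5) -> Forecast:
    """Full pipeline: retrieval feeds reasoning."""
    return reason(question, retrieve(question, search, llm, max_articles), llm)


# Stubs standing in for a real news API and a real LLM (assumptions).
def fake_search(query: str) -> List[str]:
    return ["Article A text", "Article B text", "Article C text"]


def fake_llm(prompt: str) -> str:
    if prompt.startswith("Summarize"):
        return "one-line summary"
    return "0.7\nMost coverage points toward yes."


result = forecast("Will X happen by June?", fake_search, fake_llm, max_articles=2)
print(result.probability, result.rationale)
```

Because the same `llm` callable is used for both summarizing and reasoning, the sketch also shows why “system” is the right word: the model is called several times with different prompts, and the quality of the final number depends on what the retrieval step hands over.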

Did it work?

Within the scope of the question they asked, yes! That question is: “Can our ML system predict the outcome of a prediction market on par with or better than the averaged human prediction?”

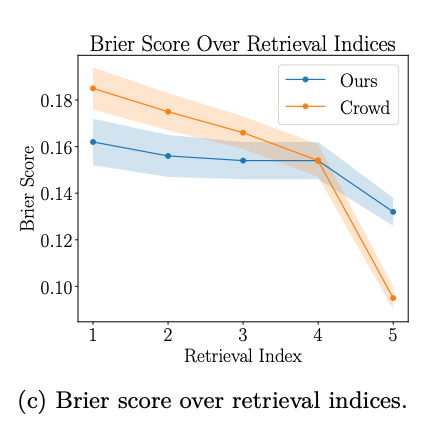
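This post does not go into how "on par or better" is measured. One standard way to score probabilistic forecasts against resolved outcomes is the Brier score, the mean squared error between the forecast probability and the 0/1 outcome, where lower is better. The sketch below uses it as an assumed metric, and the numbers are invented purely for illustration; they are not results from the paper.

```python
from typing import Sequence


def brier_score(probabilities: Sequence[float], outcomes: Sequence[int]) -> float:
    """Mean squared error between forecast probabilities and 0/1 outcomes."""
    if not probabilities or len(probabilities) != len(outcomes):
        raise ValueError("need equal-length, non-empty inputs")
    squared_errors = [(p - o) ** 2 for p, o in zip(probabilities, outcomes)]
    return sum(squared_errors) / len(squared_errors)


# Invented example data: four questions that resolved yes, no, yes, no.
outcomes = [1, 0, 1, 0]
system_probs = [0.8, 0.3, 0.6, 0.1]  # hypothetical ML system forecasts
crowd_probs = [0.9, 0.2, 0.7, 0.1]   # hypothetical averaged human forecasts

system_score = brier_score(system_probs, outcomes)
crowd_score = brier_score(crowd_probs, outcomes)
print(f"system: {system_score:.4f}  crowd: {crowd_score:.4f}")
print("system at least as good as crowd:", system_score <= crowd_score)
```

A forecaster who always answers 0.5 scores 0.25 on this metric, which gives a useful baseline: a system has to beat that before comparing it to the crowd means anything.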

Notably, however, their accuracy was retrieval-dependent: it improved as the number of articles provided increased.

So an alternative reading is that all of the alpha was in the highly refined, older search algorithm, which was particularly good at identifying relevant articles that represented the actual crowd. This goes back to each algorithm being, in a sense, an instrument of intelligence: a way of simplifying the computationally irreducible into something that can be computed.

What would happen, for example, if all the articles provided to the ML system were on the wrong side of a forecast? Would the LLM detect the issue and form an opinion that contradicts the data? That is really the unique ability humans have: to not behave as stochastic parrots.

🗞️ Things Happen

OpenAI revises pricing: it now prices tokens by the million. Speculation is mounting that price cuts are approaching, a natural reaction to Google Gemini and Mistral closing in on capability.

Llama3 release is scheduled for July. Rumors are that it will beat GPT-4. Public backlash against Gemini has set the release back, as Mark Zuckerberg battles his internal bureaucracy to make the model less “safe”.

GPT-5 release rumors swirl