2024-09-06: The Schlep is Good

Honeycomb.sh's coding agent achieves 22% accuracy on SWE-bench through meticulous fine-tuning and iterative error correction, showcasing the dedication required.



The Schlep is Good

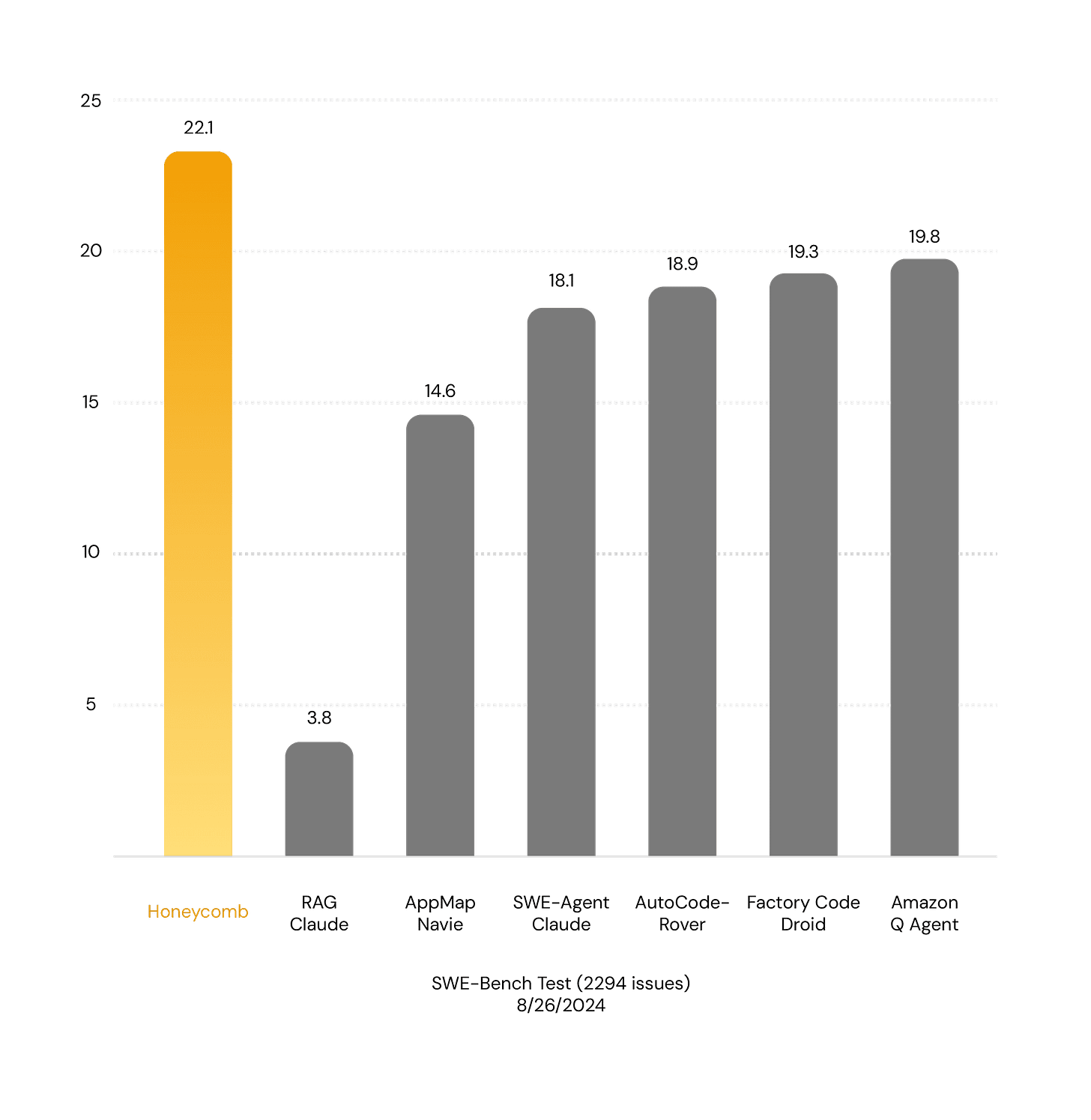

This morning YC startup honeycomb.sh launched. The product is a coding agent. The benchmark most often used to measure progress on coding agents is SWE-bench (that's SoftWare Engineering benchmark, for the non-engineers), a collection of 2000+ actual engineering issues drawn from GitHub repositories.

Honeycomb's 22% on SWE-bench is state of the art right now, and it also achieved 40% on the smaller verified version of the test set.

What’s interesting about this?

It’s schlep! As in, they’ve taken an existing foundation model, fine-tuned it multiple times for many different subtasks, and slowly chiseled away at every single error case until they ended up with a group of agents: specialized AI models that each perform a specific task well.

Some interesting notes on performance:

Median token usage per patch: 2.6 million tokens!

90th percentile token usage: 11.82 million tokens!

Median solving time: 28 minutes

90th percentile cost (my guess): 25 dollars
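A quick back-of-the-envelope check on those numbers, as a sketch only: if the guessed $25 really corresponds to the 90th percentile token count, it implies a blended price of roughly $2.1 per million tokens, and at that same rate the median patch would cost about $5.50. The single blended rate is my assumption; the report does not give a price, and real pricing differs for input and output tokens.

```python
# Figures quoted above. The dollar amount is a guess, not a reported number.
MEDIAN_TOKENS_M = 2.6    # median tokens per patch, in millions
P90_TOKENS_M = 11.82     # 90th percentile tokens per patch, in millions
P90_COST_USD = 25.0      # guessed 90th percentile cost


def implied_price_per_million(cost_usd: float, tokens_m: float) -> float:
    """Blended price per million tokens implied by a cost and a token count."""
    return cost_usd / tokens_m


def estimated_cost(tokens_m: float, price_per_million: float) -> float:
    """Cost of a run at a single assumed blended rate."""
    return tokens_m * price_per_million


price = implied_price_per_million(P90_COST_USD, P90_TOKENS_M)
median_cost = estimated_cost(MEDIAN_TOKENS_M, price)

print(f"implied blended price: ${price:.2f} per million tokens")
print(f"estimated median cost per patch: ${median_cost:.2f}")
```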

It's a whole lot of the model correcting itself over and over again in a seemingly endless iterative loop (with a hard cutoff at 60 minutes).
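To make the shape of that loop concrete, here is a minimal sketch of "propose, check, feed the failure back, repeat until the clock runs out". Everything in it is hypothetical: `solve_with_budget`, `propose_patch` and `check_patch` are illustrative names, not Honeycomb's code, and the real system is certainly more involved.

```python
import time
from typing import Callable, Optional


def solve_with_budget(
    propose_patch: Callable[[str, Optional[str]], str],
    check_patch: Callable[[str], Optional[str]],
    issue: str,
    budget_seconds: float = 60 * 60,  # the 60 minute hard cutoff
    clock: Callable[[], float] = time.monotonic,
) -> Optional[str]:
    """Retry until a patch passes the check or the time budget is spent."""
    deadline = clock() + budget_seconds
    feedback: Optional[str] = None
    while clock() < deadline:
        patch = propose_patch(issue, feedback)
        feedback = check_patch(patch)  # None means the patch passed
        if feedback is None:
            return patch
    return None  # budget exhausted without a passing patch


# Toy stand-ins for the model and the test runner (assumptions, for illustration).
attempts = []


def toy_propose(issue: str, feedback: Optional[str]) -> str:
    attempts.append(feedback)
    return f"patch-v{len(attempts)}"


def toy_check(patch: str) -> Optional[str]:
    return None if patch == "patch-v3" else f"{patch} failed tests"


result = solve_with_budget(toy_propose, toy_check, "fix bug")
print(result, len(attempts))
```

The point of the sketch is that each failed check becomes input to the next attempt, which is where all those millions of tokens go.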

Architecture

The best way to explain the architecture is that it’s a task conveyor belt, where multiple models try to progress the task to the next step, with other models ready to handle specific common failure cases, determine success and promote the half done work to the next step, etc.
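Here is roughly how I picture the conveyor belt, as a toy sketch rather than the actual design. Each stage has a worker that tries to advance the task, a judge that decides whether to promote it, and handlers for known failure cases. All names (`Stage`, `run_belt`, the stage functions) are made up for illustration; in the real system each callable would presumably be a fine-tuned model.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional


@dataclass
class Stage:
    name: str
    work: Callable[[dict], dict]            # tries to advance the task
    judge: Callable[[dict], Optional[str]]  # None = promote, else a failure label
    recoveries: Dict[str, Callable[[dict], dict]] = field(default_factory=dict)
    max_attempts: int = 3


def run_belt(task: dict, stages: List[Stage]) -> dict:
    """Move a task through the stages, retrying each one a bounded number of times."""
    for stage in stages:
        for _ in range(stage.max_attempts):
            task = stage.work(task)
            failure = stage.judge(task)
            if failure is None:
                task.setdefault("history", []).append(stage.name)
                break  # promote the half-done work to the next stage
            handler = stage.recoveries.get(failure)
            if handler is not None:
                task = handler(task)  # specialist for a known failure case
        else:
            raise RuntimeError(f"stage {stage.name!r} did not converge")
    return task


# Toy stages: the edit step fails once, a recovery handler adds a hint, then it passes.
def locate(task: dict) -> dict:
    return {**task, "file": "app.py"}


def edit(task: dict) -> dict:
    return {**task, "patch": "fixed" if task.get("hint") else "brokn"}


def judge_edit(task: dict) -> Optional[str]:
    return None if task["patch"] == "fixed" else "syntax_error"


def fix_syntax(task: dict) -> dict:
    return {**task, "hint": "check syntax"}


stages = [
    Stage("locate", locate, lambda t: None),
    Stage("edit", edit, judge_edit, {"syntax_error": fix_syntax}),
]
result = run_belt({"issue": "crash on empty input"}, stages)
print(result["history"], result["patch"])
```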

They resolved a bunch of common issues, e.g. context window overflow (how many files to send with each API call), with shortcuts and hacks (dropping the least recently used files). It’s OK, but it feels... unsatisfying.
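For the curious, the least-recently-used trick is only a few lines. This is a minimal sketch under my own assumptions: `FileContext` is a hypothetical name, and tokens are counted as whitespace-separated words, where a real system would use the model's tokenizer.

```python
from collections import OrderedDict
from typing import List


class FileContext:
    """Keeps files for the next API call, evicting the least recently used on overflow."""

    def __init__(self, max_tokens: int):
        self.max_tokens = max_tokens
        self._files: "OrderedDict[str, str]" = OrderedDict()

    @staticmethod
    def count_tokens(text: str) -> int:
        # Crude stand-in: one token per whitespace-separated word.
        return len(text.split())

    def total_tokens(self) -> int:
        return sum(self.count_tokens(text) for text in self._files.values())

    def touch(self, path: str, text: str) -> None:
        """Add or refresh a file, then drop the oldest files until the budget fits."""
        self._files[path] = text
        self._files.move_to_end(path)  # mark as most recently used
        while self.total_tokens() > self.max_tokens and len(self._files) > 1:
            self._files.popitem(last=False)  # evict least recently used

    def paths(self) -> List[str]:
        return list(self._files)


ctx = FileContext(max_tokens=6)
ctx.touch("a.py", "one two three")
ctx.touch("b.py", "four five six")
ctx.touch("a.py", "one two three")  # a.py is now the most recently used
ctx.touch("c.py", "seven eight")    # over budget, so b.py is dropped
print(ctx.paths())
```

It works, but you can see why it feels unsatisfying: recency is a proxy for relevance, and the file that gets dropped may be exactly the one the next step needs.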

Significance

For two MIT dropouts, Andrew Liu and Ishank Agrawal, hacking away together, this is a tremendous achievement of grind. I can only imagine how painful it was to nail the error cases one by one.

I can't shake the feeling that they will get lapped soon, though. I expect the agentic workflows to fall with the next OpenAI release.

Full technical report (here)