2024-05-17: Slow Takeoff

AI experts discuss the timeline for achieving Artificial Super-Intelligence, with some predicting its arrival within five years.


Here’s today at a glance:

🐢 Slow Takeoff

Dwarkesh spoke to John Schulman, a co-founder of OpenAI, this week. John led the creation of ChatGPT and currently leads the post-training team.

TLDR: AGI 2027; ASI 2029; the return of Clippy.

John Schulman (OpenAI Cofounder) - Reasoning, RLHF, & Plan for 2027 AGI

Chatted with John Schulman (cofounded OpenAI and led ChatGPT creation) on: how post-training tames the shoggoth & enabled GPT-4o; AI coworkers in 1-2 years; the plan if AGI comes in 2025; reasoning traces, long horizon RL, & multimodal agents; plateaus & moats.

A) AGI timeline 2027 (3 years), ASI timeline 2029 (5 years)

“[AGI] I don't think this is going to happen next year but it's still useful to have the conversation. It could be two or three years instead.”

“Oh, it replaces my job? Maybe five years.”

AGI/ASI both defined as doing autonomous scientific research

Postscript: Twitter pointed out that the job of the leading AI researcher in the world could not be said to require super-intelligence… which is fair, I guess…

B) Capability rollout schedule and product roadmap

2025/2026 - “carry out a whole coding project instead of it giving you one suggestion on how to write a function… the model taking high-level instructions on what to code and going out on its own, writing any files, and testing it, and looking at the output… iterating”

“They get better at recovering from errors or dealing with edge cases. When things go wrong, they’ll know how to recover from it.”

“Improvement in the ability to do long-horizon tasks might go quite far”

“Will be able to use websites that are designed for humans just by using vision”

“It could be something that's like a Clippy on your computer… Proactivity is one thing that's been missing.”

C) Running out of data is overstated

“I wouldn't expect us to immediately hit the data wall”

“To fix those problems… we found that a tiny amount of data did the trick… something like 30 examples.”

“[Bigger models are more]… sample efficient: Just a little bit of data or their generalization from other abilities will allow them to get back on track.”

“Just having a bigger model gives you more chances to get the right function.”

D) Building from scratch is complex and only a few orgs can do it. But distilling is easy.

“It's not trivial to spin this up immediately.”

“You can distill the models, or you can take someone else's model and clone the outputs. You can use someone else's model as a judge to do comparisons.”

“it goes against terms of service policies… But some of the smaller players are doing that… That catches you up to a large extent.”

Opinion

1) OpenAI seems locked in on agents that can plan and carry out long-horizon tasks within 3 years, extending to much longer horizons within 5 years. This is AGI

Surprising to say the least

Seems there is a lot of low-hanging fruit because current data is largely single-turn responses

The near-term definition of AGI seems more like a cross between a to-do list, a cron job, and a voice chatbot.

2) Views on data are surprising: the data wall barely seems to exist.

Small number of samples to tune behavior is very surprising

Indicates that it is more important to identify the desired functionality and then collect the data needed for it than to generate large amounts of data

There’s still low-hanging fruit!

3) The 4o launch will provide the last piece of the feedback loop to refine the model towards AGI

Three years to AGI is not a lot of time!

All the pieces have to be in place for the final run

Imagine 4o getting access to your desktop and assisting on projects: that will provide the data necessary for multi-turn responses

Whatever 4o sees on your screen… it will learn how to do in 3 years

4) Computer science coding, as we know it, has a limited shelf life.

Obviously

Dwarkesh was like a CIA interrogator for nerds trying to figure out timelines: “One year?”, “Two years?”, “Two to three?”, “Five?”

Of Fear

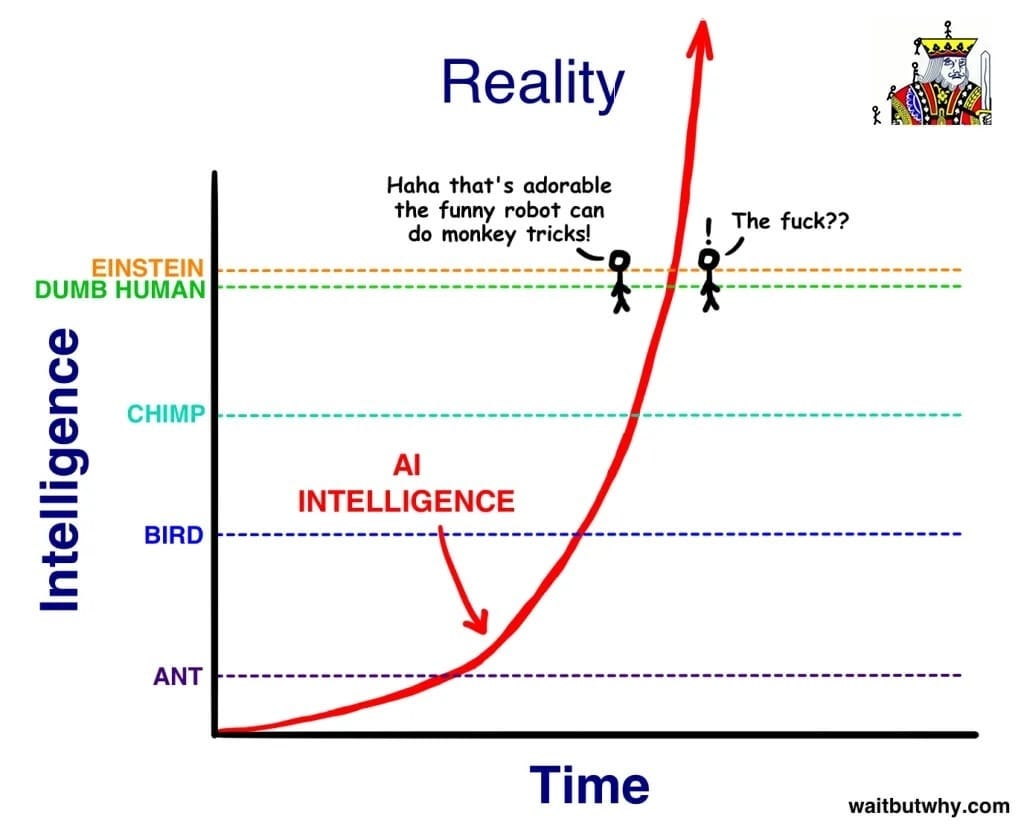

The canonical safetyist argument has always been this Wait But Why diagram, implying that the distance from Dumb Human to Einstein is minuscule compared with the ground a rapidly improving AI will cover.

My criticism of this is that rarely do humans achieve anything alone. Most human achievement springs from the collaborative work of groups of enthusiasts, sometimes over centuries. So while exceeding Einstein’s intelligence may happen in the short run, exceeding the intelligence of all humans on the planet combined, augmented by their own computers and intelligent devices, is a much greater task.

The task becomes insurmountable if other factions also have access to near-AGIs that are improving at almost the same rate.

Anyway, the 2029 timeline dovetails neatly with Kurzweil’s predictions, and so is well worth noticing.